INTRODUCING MEGAPIXEL VISUAL REALITY

MEGAPIXEL VR is an innovative technology partner with numerous patents. It has won accolades from Live Design, the Emmys and the Academy Awards. Launched in 2019, its state-of-the-art HELIOS® LED Processing Platform provides the most advanced in-camera visual effects tools for virtual production, with a full 8K video pipeline, support for HDR formats and customisable, upgradeable inputs.

Built on AV-over-IP infrastructure, HELIOS systems are highly resilient: Seamless Failover mode delivers visually lossless failover in the event of a data interruption or frame drop. For robust operation, Megapixel’s patented NanoSync™ technology provides the most accurate video sync control available, down to the nanosecond, even over 10km fibre links.
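The article does not say how Seamless Failover works internally. One well-known way IP video achieves visually lossless failover is the idea behind SMPTE ST 2022-7 seamless protection switching. The same packets travel over two network paths, and the receiver keeps whichever copy arrives, so a loss on one path is filled from the other. The sketch below shows only that general idea. It is not HELIOS code, and the function name is hypothetical. It also ignores sequence-number wraparound and timing buffers.

```python
Packet = tuple[int, bytes]  # (sequence number, payload)


def merge_redundant_streams(primary: list[Packet], secondary: list[Packet]) -> list[bytes]:
    """Combine two identical packet streams that travelled over separate paths.

    The first copy of each sequence number is kept and later duplicates are
    ignored, so a packet lost on one path is recovered from the other. The
    output only has a gap if the same packet was lost on both paths.
    """
    received: dict[int, bytes] = {}
    for stream in (primary, secondary):
        for seq, payload in stream:
            received.setdefault(seq, payload)  # first copy wins
    return [received[seq] for seq in sorted(received)]


path_a = [(0, b"a"), (1, b"b"), (3, b"d")]  # packet 2 dropped on path A
path_b = [(0, b"a"), (2, b"c"), (3, b"d")]  # packet 1 dropped on path B
print(merge_redundant_streams(path_a, path_b))
```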

HELIOS also features a superior end-to-end, cinema-grade colour workflow, in which metadata is always honoured to ensure accurate colour gamut, greyscale and tone mapping. It is the most accurate in-camera workflow, reducing the time needed to colour-correct content prior to filming – and HELIOS is quickly becoming the LED processor of choice for in-camera VFX productions.

1899

Netflix’s highly anticipated drama 1899, set on a migrant steamship on the high seas, recently wrapped at DARK BAY’s new virtual production stage at Studio Babelsberg in Germany. Designed by ARRI Solutions Group for Netflix, the studio is the largest permanently installed volume in Europe, with its 7m x 55m LED wall – and Megapixel’s HELIOS was hand-picked to power it.

“The HELIOS processors are great to work with. The many calibration features allow us to achieve the best image fidelity that our LED panels can,” says Jesse Kretschmer, technical director at DARK BAY. “The HELIOS API is also easy to work with, and with it we have been able to develop custom tools with the exact controls and information we need to keep productions running smoothly.”

Megapixel supported industry partners ARRI, Faber AV, Framestore and ROE Visual (LED panels) in the development of the studio, which will offer future customers a proven set-up for virtual production.

DARK BAY VIRTUAL PRODUCTION STUDIO

The rotating stage developed at Studio Babelsberg for the Netflix series 1899. Image credit: Alex Forge/Netflix

COLOUR PERFORMANCE

What is shown on your grading monitor is what appears on the LED display – this allows HELIOS to offer the best end-to-end workflow in the industry

ARRI’S MIXED REALITY STUDIO

In Uxbridge, London, the HELIOS processor continues to drive large volumes. Built by ARRI in partnership with Creative Technology, the stage comprises 343 square metres of LED walls and is one of the biggest permanent mixed-reality spaces in the world. It can be programmed to display 360° imagery that, even when not in-frame, casts dynamic, fully integrated lighting effects onto actors. For this reason, HELIOS was once again selected; it can deliver a cinema-grade colour workflow and industry-leading image quality.

“In virtual production, a clean pipeline and accurate colour reproduction are essential to a great-looking result,” says Tom Burford, head of technical services at Creative Technology – which partnered with ARRI to provide the volume’s playback system. “HELIOS provides this with a very precise representation of our content. It’s exciting to see this in the ARRI stage.”

Always innovating, Megapixel has developed the newest virtual production technology, GhostFrame™. It allows the simultaneous capture of multiple video feeds that are hidden to the naked eye, as well as a chroma key matte and even hidden tracking markers. It can be used via the HELIOS Processing Platform to make the impossible possible for all your virtual production, XR and broadcast needs.

PART ONE

ZERO PRODUCTION INTERFERENCE

In part one of a three-part series, we get into what makes Megapixel’s HELIOS® LED Processing Platform so production-friendly for ICVFX

LOOKING AHEAD

Future-proof your virtual production workflow with HELIOS, using existing infrastructure – while maintaining the ability to upgrade processor inputs and LED displays

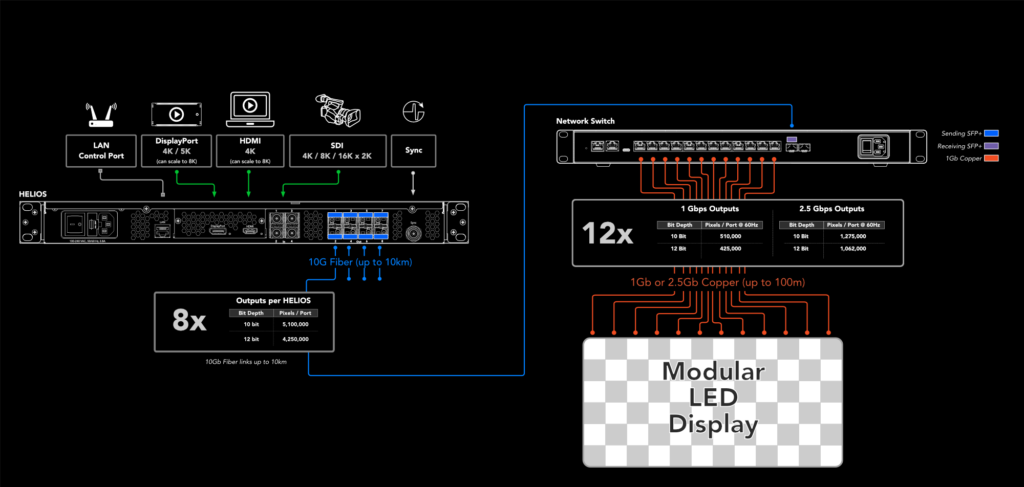

MEGAPIXEL’S PROPRIETARY PROCESSING technology extends from the video input all the way to ultra-powerful tile-side processing. Leveraging sub-pixel calibration and off-axis colour metadata, coupled with the most advanced LED refresh algorithm, the patented technology makes sophisticated virtual production stages possible. The HELIOS LED Processing Platform sits at the centre of the pipeline and features camera-friendly performance, cinema-grade colour accuracy, and a future-proof native 8K workflow via upgradeable and modular inputs.

Over this three-part series, Definition will analyse the specifics, starting with what makes the technology so camera-friendly for DOPs. But first, it’s important to recognise what makes working in a virtual production volume challenging. Megapixel CEO Jeremy Hochman explains: “Cameras capture in bursts and LEDs pulse on and off – sometimes at 1000 times per second – and if that refresh of the LED is not perfectly time-aligned to what the camera is doing, you’re going to see artefacts.”
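A simplified model makes Hochman’s point concrete. It assumes a plain square-wave LED that is lit for the first half of every refresh period, which is far cruder than the refresh algorithm Megapixel actually uses. The model adds up how much light an exposure window collects as its start time slides across one refresh period. With a rolling shutter, rows start exposing at different moments, so any variation shows up as bands. The 1000Hz figure comes from the quote above. The 960Hz comparison and the 1/48s exposure (24fps with a 180° shutter) are illustrative choices, and all function names are hypothetical. Exact fractions avoid floating-point noise.

```python
import math
from fractions import Fraction


def lit_time(t: Fraction, period: Fraction, duty: Fraction) -> Fraction:
    """Total time the LED has been lit between 0 and t.

    Simplified PWM model: lit for the first `duty` fraction of each refresh period.
    """
    cycles = math.floor(t / period)
    into_cycle = t - cycles * period
    return cycles * duty * period + min(into_cycle, duty * period)


def light_captured(start: Fraction, exposure: Fraction, period: Fraction, duty: Fraction) -> Fraction:
    """Light collected by an exposure window opening at `start`."""
    return lit_time(start + exposure, period, duty) - lit_time(start, period, duty)


def brightness_ratio(refresh_hz: int, exposure: Fraction,
                     duty: Fraction = Fraction(1, 2), steps: int = 100) -> Fraction:
    """Darkest / brightest capture as the exposure start slides across one refresh period.

    1 means every start time collects identical light (no banding).
    """
    period = Fraction(1, refresh_hz)
    samples = [light_captured(period * k / steps, exposure, period, duty) for k in range(steps)]
    return min(samples) / max(samples)


exposure = Fraction(1, 48)  # 24fps, 180-degree shutter
print(brightness_ratio(1000, exposure))  # 62/63: exposure covers 20.83 refresh periods
print(brightness_ratio(960, exposure))   # 1: exposure covers exactly 20 refresh periods
```

In this toy model, the partial refresh period at the end of each exposure is what produces the variation. If the LED timing is locked to the camera, every exposure sees the same number of pulses.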

This interference pattern is called scan lines. It’s the same phenomenon that makes car wheels appear to spin backwards on video at high speed. It happens because cameras don’t capture continuous footage, but a series of discrete images per second. Our brains fill the gaps by creating an illusion of continuous movement – even if it’s not in the right direction!

“It’s the single most problematic thing about pointing a camera at an LED screen, because when these artefacts occur, the only way to remove them is to rotoscope the entire scene to trace over footage and produce realistic action – and this is costly,” says Hochman. “With HELIOS, we can reduce this interference between assets and screen that’s not visible on camera. A lot of this is due to the refresh rates at which we run the panels.”
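The car-wheel comparison is a classic case of temporal aliasing. A wheel with identical spokes looks the same every 360/spokes degrees. Between two frames, the camera therefore cannot distinguish the true rotation from that rotation plus or minus a whole spoke spacing, and the viewer perceives the smallest step. The function below (a hypothetical name, for illustration only) computes that perceived step.

```python
def apparent_step_degrees(rev_per_s: float, spokes: int, fps: float) -> float:
    """Rotation a viewer perceives between consecutive frames for a spoked wheel.

    Positive means the wheel appears to turn forwards, negative means backwards.
    """
    symmetry = 360.0 / spokes               # wheel looks identical after this many degrees
    true_step = rev_per_s * 360.0 / fps     # real rotation between frames
    wrapped = true_step % symmetry          # positions the camera cannot tell apart
    # The perceived motion is the smallest equivalent step.
    return wrapped - symmetry if wrapped > symmetry / 2 else wrapped


# A five-spoke wheel filmed at 24fps:
print(apparent_step_degrees(1.0, 5, 24))  # turns forwards as expected
print(apparent_step_degrees(4.5, 5, 24))  # faster in reality, yet appears to creep backwards
```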

THINKING OUTSIDE THE BOX

Instead of just having a data processing box that sends information to the LED tiles, Megapixel also makes the magic happen on the tiles themselves. “We have mini processors, called PX1s, that go inside each individual tile – and then our HELIOS unit acts like a traffic cop, taking in all the incoming data and dispatching that over native fibre to go from tile to tile. What’s nice about this is that, rather than having a rack unit as your choke point processing tens of millions of pixels, it can take all that metadata and interpret it properly – giving you all the management and control to pass it along in little chunks, for the tiles to do their own processing.”

Distributing the processing in this way allows the tiles to run at higher refresh rates, avoiding interference. With finer control over timing, the LEDs can emit light precisely while the camera’s shutter is open and ready to receive it. Using Megapixel’s groundbreaking NanoSync™ technology, this can be done down to the nanosecond, even over 10km fibre links. “I know a lot of DOPs who’ve had a poor experience working in LED volumes – because of this interference. It doesn’t matter what LED you buy. Although it may look interesting to the eye, it’s not necessarily going to work on camera without the proper processing – and we’ve got that covered,” concludes Hochman.
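Hochman describes HELIOS as a traffic cop that passes the raster along “in little chunks”, so each tile’s PX1 only processes its own pixels. The sketch below shows that division of labour in its simplest form: it cuts a raster into per-tile regions and clips the tiles on the right and bottom edges. The tile resolution, class and function names are all hypothetical. The article does not describe how HELIOS actually addresses tiles over fibre.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TileRegion:
    col: int
    row: int
    x: int       # left edge of this tile's region in the raster, in pixels
    y: int       # top edge, in pixels
    width: int
    height: int


def split_raster(raster_w: int, raster_h: int, tile_w: int, tile_h: int) -> list[TileRegion]:
    """Cut a raster into per-tile regions, row by row, clipping tiles at the right and bottom edges."""
    regions = []
    for row, y in enumerate(range(0, raster_h, tile_h)):
        for col, x in enumerate(range(0, raster_w, tile_w)):
            regions.append(TileRegion(col, row, x, y,
                                      min(tile_w, raster_w - x),
                                      min(tile_h, raster_h - y)))
    return regions


# Hypothetical 256 x 256 px tiles covering a 1000 x 500 px section of wall.
regions = split_raster(1000, 500, 256, 256)
print(len(regions), regions[-1])
```

Each region is small and independent, so the heavy per-pixel work can run in parallel across tiles rather than in one rack unit.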

Read on as we discuss HELIOS’ cinema-grade colour accuracy and go into detail about the benefits of storing sub-pixel colour metadata on the LED module itself.

SYNCHRONISED OUTPUT

The light from the LED is timed with the video or genlock source, and offsets can be dialled in by the nanosecond – giving the best control options
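Over long fibre runs, the sync signal itself takes time to travel. Light in glass covers roughly 5 microseconds per kilometre, so a 10km link adds tens of thousands of nanoseconds. The article does not explain how NanoSync handles this. The sketch below shows one generic approach, as an assumption: each tile delays its emission by the difference between its own fibre delay and the longest one, plus a user-dialled offset. With that rule, every tile lights at the same instant. The fibre group index and all names are illustrative assumptions.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458
FIBRE_GROUP_INDEX = 1.468  # assumed value, typical of single-mode fibre


def fibre_delay_ns(length_m: float) -> int:
    """One-way propagation delay through a fibre run, rounded to whole nanoseconds."""
    return round(length_m * FIBRE_GROUP_INDEX / SPEED_OF_LIGHT_M_PER_S * 1e9)


def emission_delays_ns(fibre_lengths_m: dict[str, float], user_offset_ns: int = 0) -> dict[str, int]:
    """Delay each tile waits after receiving sync before emitting light.

    Tiles on shorter runs hear the reference earlier, so they wait longer.
    The user offset shifts every tile together to line up with the shutter.
    """
    arrival = {tile: fibre_delay_ns(m) for tile, m in fibre_lengths_m.items()}
    latest = max(arrival.values())
    return {tile: latest - arrival[tile] + user_offset_ns for tile in arrival}


lengths = {"tile_near": 20.0, "tile_far": 10_000.0}  # hypothetical fibre runs, in metres
delays = emission_delays_ns(lengths, user_offset_ns=250)
print(fibre_delay_ns(10_000), delays)
```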

PART TWO

COLOUR CHOICE

The benefits of sub-pixel colour metadata on the LED module

A LIGHT IN THE DARKNESS

The HELIOS LED Processing Platform offers improved greyscale performance – and thus more accurate shadow detail – compared with a traditional processing tool, as seen above

LAST TIME, WE got into the nitty-gritty of what makes HELIOS production-friendly for ICVFX and why. In part two of a three-part series exploring Megapixel’s HELIOS LED Processing Platform, we break down its cinema-grade colour accuracy by delving into its inner workings.

Mini PX1 processors that fit into each LED tile depart from the archetypal approach of using a rack unit as a choke point. Instead, they allow for proper metadata management, removing the all-too-common interference problem that occurs when pointing a camera at an LED screen. HELIOS acts as a traffic cop, taking in all the incoming data and dispatching it from tile to tile.

In-tile processing is unique to HELIOS, and it’s a technique that extends to the platform’s handling of colour metadata. Having also built cameras and lenses (and won an Emmy Award for the former), Megapixel’s engineering team understand the importance of colour accuracy and of the path colour takes from video ingest through to display output.

“A big thing we pride ourselves on is that, with every single LED tile, we develop a process with the manufacturer to capture the raw metadata of not just what the tile is doing, but each pixel,” explains Jeremy Hochman, CEO at Megapixel. “HELIOS is then, in real time, able to read back the metadata from the tile and process it in a way that is accurate and helps the user understand what is represented by the LED screen.”

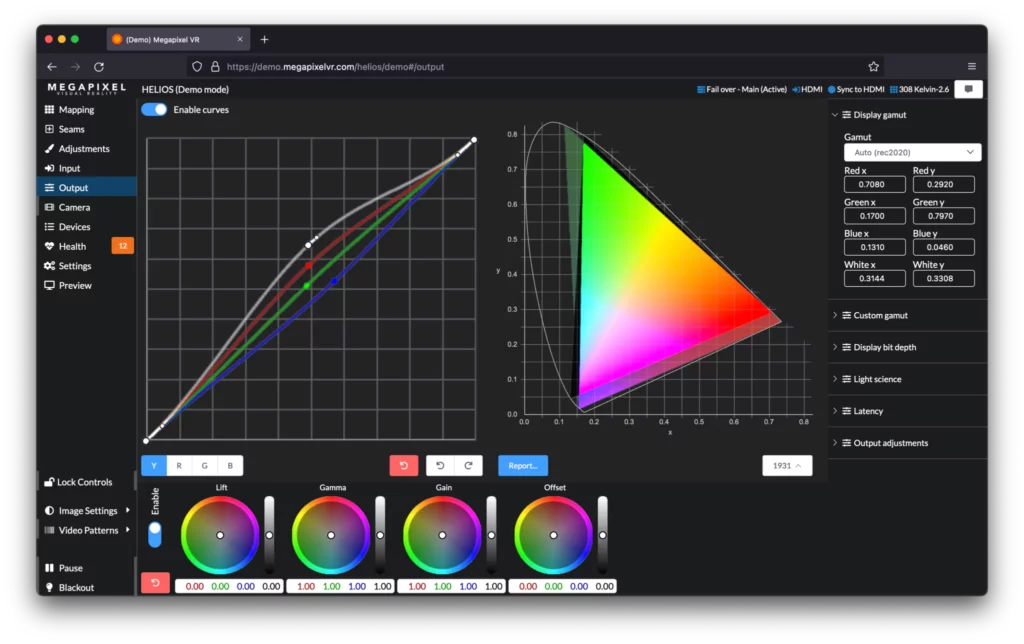

The integrity of metadata is always honoured to ensure that colour gamut, greyscale performance and tone mapping look right the first time. If desired, they can also be tweaked and managed within the colour grading page of HELIOS. “It’s opened in a browser, so there’s no special software to download – which allows for live colour grading of the LED screen at 24-bit per colour accuracy, providing more precision than you’d traditionally expect from doing it in a render engine,” says Hochman.

THE GRADING PAGE

The purpose of real-time colour grading isn’t necessarily to style the scene, but to calibrate for how the camera is interpreting it. Out of the box, tiles are calibrated precisely to the D65 white point and produce the colours they are meant to. But elements in the scene can sometimes affect how they look.

Hochman explains: “Imagine you’re doing a process shot and there’s a bright green sports car on stage with a lot of light shining on it. That bounces back onto the LED wall, creating a green wash across the screens themselves. You need the opportunity to remove it, because you don’t want that coloured tint to affect what the camera is capturing for the volume. HELIOS allows for this correction.”

He continues: “Likewise, various camera models process colour differently. Rather than trying to tune an 8- or 10-bit video signal to make them match, you can tune the native output of the LED screen because the tech touches every single subpixel – it’s not in the upstream equipment.”
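The precision argument comes down to arithmetic. Suppose a small correction, such as a 10% trim, is applied to a 10-bit signal and the result is stored back at 10 bits. Neighbouring input codes then collapse onto the same output code. If the same correction is applied where the output has 24 bits per colour (the figure Hochman quotes), every input step survives. The toy function below (a hypothetical name) counts the surviving levels. It illustrates only the quantisation effect and does not model HELIOS’s actual pipeline.

```python
def distinct_levels_after_gain(gain: float, in_bits: int, out_bits: int) -> int:
    """Apply a gain to every input code and count how many distinct output codes remain."""
    in_max = (1 << in_bits) - 1
    out_max = (1 << out_bits) - 1
    outputs = {round(code / in_max * gain * out_max) for code in range(in_max + 1)}
    return len(outputs)


# A 10% trim kept at 10 bits merges neighbouring codes...
print(distinct_levels_after_gain(0.9, in_bits=10, out_bits=10))
# ...while the same trim applied with 24 bits per colour keeps all 1024 input steps.
print(distinct_levels_after_gain(0.9, in_bits=10, out_bits=24))
```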

The grading interface also has a report button, which gives users the tristimulus colour coordinates of every single tile – and, because the report is generated in real time, you can see exactly what the wall and each of its pixels are doing across the entire system. From this, the x and y chromaticity values of the red, green and blue primaries can be obtained and used in an ACEScg workflow.
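Going from tristimulus values to x and y chromaticities is a standard calculation: x = X/(X+Y+Z) and y = Y/(X+Y+Z). The chromaticities of three primaries plus a white point are enough to build the 3x3 RGB-to-XYZ matrix that colour-managed pipelines such as ACEScg rely on, using the construction described in SMPTE RP 177. The report’s actual format is not described in the article, so this sketch substitutes the published Rec. 709 primaries and D65 white for measured tile values. All function names are illustrative.

```python
def xyz_to_xy(X: float, Y: float, Z: float) -> tuple[float, float]:
    """Chromaticity coordinates (x, y) from tristimulus values (X, Y, Z)."""
    total = X + Y + Z
    return X / total, Y / total


def xy_to_xyz(x: float, y: float, Y: float = 1.0) -> list[float]:
    """Tristimulus values for chromaticity (x, y) at luminance Y."""
    return [x * Y / y, Y, (1.0 - x - y) * Y / y]


def det3(m: list[list[float]]) -> float:
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def solve3(m: list[list[float]], v: list[float]) -> list[float]:
    """Solve m @ s = v for s using Cramer's rule."""
    d = det3(m)
    solution = []
    for col in range(3):
        replaced = [row[:] for row in m]
        for r in range(3):
            replaced[r][col] = v[r]
        solution.append(det3(replaced) / d)
    return solution


def rgb_to_xyz_matrix(primaries_xy, white_xy):
    """RGB->XYZ matrix from primary and white-point chromaticities."""
    cols = [xy_to_xyz(x, y) for x, y in primaries_xy]
    p = [[cols[c][r] for c in range(3)] for r in range(3)]  # each primary's XYZ is a column
    scale = solve3(p, xy_to_xyz(*white_xy))                 # make R=G=B=1 land on the white point
    return [[p[r][c] * scale[c] for c in range(3)] for r in range(3)]


# Stand-ins for report values: Rec. 709 primaries and the D65 white point.
REC709 = [(0.64, 0.33), (0.30, 0.60), (0.15, 0.06)]
D65 = (0.3127, 0.3290)
m = rgb_to_xyz_matrix(REC709, D65)
print([round(v, 4) for v in m[1]])  # luminance (Y) row of the matrix
```

For ACEScg, the same construction would be used with that space’s own primaries and white point on the target side.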

In terms of colour accuracy, HELIOS provides dynamic colour gamut retargeting: you can configure the screen to process Rec. 709, Rec. 2020, DCI-P3 or custom gamuts. Combined with improved greyscale performance, this gives more accurate shadow detail and better separation in highlights, for spectacular images.
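Gamut retargeting, in its textbook linear-light form, is a single 3x3 matrix. It converts content RGB to XYZ using the content gamut, then converts XYZ back to RGB using the display’s native primaries. The article does not say how HELIOS implements this; it only says that real tiles carry their own measured colour metadata. This sketch therefore treats a hypothetical panel as if its native primaries were exactly Rec. 2020 and ignores transfer functions. All names are illustrative.

```python
def xy_to_xyz(x: float, y: float, Y: float = 1.0) -> list[float]:
    return [x * Y / y, Y, (1.0 - x - y) * Y / y]


def mat_mul(a, b):
    return [[sum(a[r][k] * b[k][c] for k in range(3)) for c in range(3)] for r in range(3)]


def mat_vec(m, v):
    return [sum(m[r][k] * v[k] for k in range(3)) for r in range(3)]


def inverse3(m):
    """Inverse of a 3x3 matrix via the adjugate."""
    a, b, c = m[0]
    d, e, f = m[1]
    g, h, i = m[2]
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    return [
        [(e * i - f * h) / det, (c * h - b * i) / det, (b * f - c * e) / det],
        [(f * g - d * i) / det, (a * i - c * g) / det, (c * d - a * f) / det],
        [(d * h - e * g) / det, (b * g - a * h) / det, (a * e - b * d) / det],
    ]


def rgb_to_xyz_matrix(primaries_xy, white_xy):
    cols = [xy_to_xyz(x, y) for x, y in primaries_xy]
    p = [[cols[c][r] for c in range(3)] for r in range(3)]  # primaries as columns
    scale = mat_vec(inverse3(p), xy_to_xyz(*white_xy))      # white point maps to R=G=B=1
    return [[p[r][c] * scale[c] for c in range(3)] for r in range(3)]


def retarget_matrix(src_primaries, dst_primaries, white):
    """Linear content RGB (source gamut) -> linear drive RGB (display primaries)."""
    return mat_mul(inverse3(rgb_to_xyz_matrix(dst_primaries, white)),
                   rgb_to_xyz_matrix(src_primaries, white))


REC709 = [(0.64, 0.33), (0.30, 0.60), (0.15, 0.06)]
REC2020 = [(0.708, 0.292), (0.170, 0.797), (0.131, 0.046)]  # stand-in for a panel's native primaries
D65 = (0.3127, 0.3290)

M = retarget_matrix(REC709, REC2020, D65)
print([round(v, 4) for v in mat_vec(M, [1.0, 0.0, 0.0])])  # drive values for pure Rec. 709 red
```

Because both gamuts share a white point, each row of the matrix sums to 1, so neutral greys pass through unchanged.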

“We understand that, creatively, a colourist might decide they want to crush the scene and have the shadow details be incredibly black. That’s absolutely fine, but the technology should never force you into creative intent,” concludes Hochman. “We’re giving users a choice with HELIOS.”

MAKE THE GRADE

Real-time colour grading lets you tune to the camera’s performance as you work

PART THREE

RESOLUTION MATTERS

The importance of native 8K and IP-based workflows in the landscape of LED volume production

STATE OF THE ART

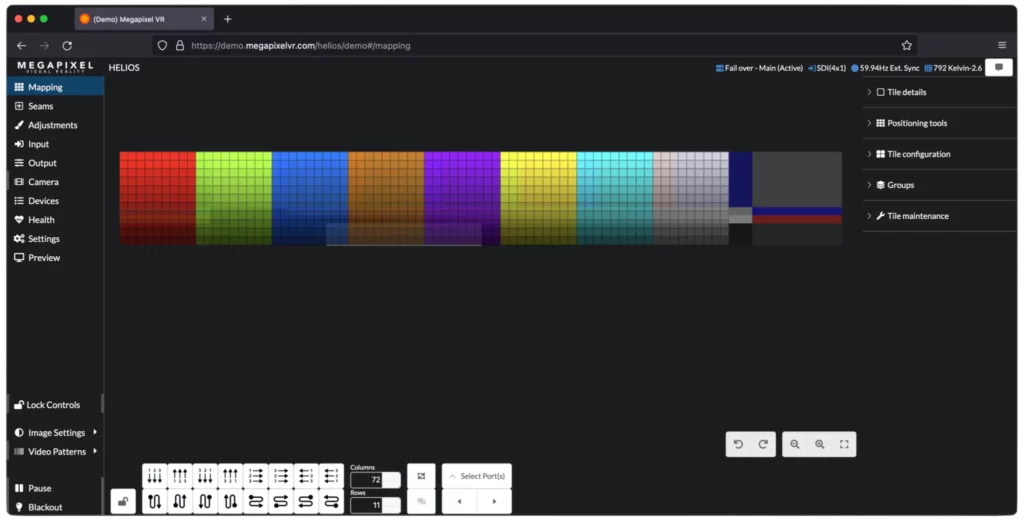

With the browser-based platform, enjoy real-time mapping and multi-user control

AS WITH ANY bleeding-edge technology, ICVFX poses certain challenges. Ensuring panels are camera-ready and managing cinema-grade colour accuracy are two of them – and in parts one and two of this series, we explored how the HELIOS LED Processing Platform meets these demands. This time around, we’re putting the spotlight on all-important fidelity and simplified workflows.

“One of the difficulties with LED volume is the enormous amount of pixels. With 8K, we’re looking at about 32 million of them,” begins Megapixel CEO Jeremy Hochman. “In a typical workflow, you’d have many 4K signals coming into switching equipment, routers, canvas managers and finally into LED processing. We composite those signals together inside HELIOS as an 8K, or even 16K, raster.”
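The compositing step Hochman describes can be pictured as placing several 4K feeds side by side in one large raster. This sketch assumes UHD feeds (3840 x 2160) and an 8K UHD raster (7680 x 4320). Four feeds fill that raster, giving about 33.2 million pixels, the same order as the “about 32 million” quoted. The function name and the simple row-by-row grid are illustrative assumptions, not a description of how HELIOS maps its inputs.

```python
UHD_W, UHD_H = 3840, 2160


def feed_layout(raster_w: int, raster_h: int,
                feed_w: int = UHD_W, feed_h: int = UHD_H) -> list[tuple[int, int]]:
    """Top-left position of each input feed when tiled row by row into one raster."""
    if raster_w % feed_w or raster_h % feed_h:
        raise ValueError("raster must be a whole number of feeds wide and high")
    return [(x, y) for y in range(0, raster_h, feed_h) for x in range(0, raster_w, feed_w)]


layout_8k = feed_layout(7680, 4320)
print(len(layout_8k), layout_8k)  # four UHD feeds in a 2 x 2 grid
print(7680 * 4320)                # pixels in the composited 8K UHD raster
```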

With a 1RU appliance serving as a single point to channel pixel data through, crews can expect a much more resilient system. With fewer components, there’s less to maintain.

While not every volume needs the full pixel capacity of 8K, spatial layout also matters. Content often needs to be moved around within a raster to accommodate regions of interest; key lights or flags, for example, may need to overlap sections where video is displayed. For volumes larger than 32 million pixels, another Megapixel processor can be added seamlessly.
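As a rough sizing rule, the number of processors a wall needs is the ceiling of its pixel count divided by the per-unit budget. This sketch assumes a budget of one 8K UHD raster per processor, which matches the article’s “about 32 million” only approximately. It also looks at the pixel budget alone, whereas a real deployment also depends on wall geometry and output routing. The constant and function name are hypothetical.

```python
# Assumed per-processor budget: one 8K UHD raster. Illustrative, not a published spec.
PIXELS_PER_PROCESSOR = 7680 * 4320


def processors_needed(wall_w_px: int, wall_h_px: int,
                      capacity: int = PIXELS_PER_PROCESSOR) -> int:
    """Smallest number of processors whose combined pixel budget covers the wall."""
    return -(-(wall_w_px * wall_h_px) // capacity)  # integer ceiling division


print(processors_needed(7680, 4320))   # a wall that exactly fills one budget
print(processors_needed(16384, 3072))  # a hypothetical 16K x 3K wall, about 50 million pixels
```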

“There’s a web server living within every HELIOS,” Hochman continues. “Any stacked devices talk to each other, from within the same network. You can walk into a volume with your smartphone and black out the screen, or adjust brightness, gamma and other parameters. It brings real simplicity to a very complex system.”

Hochman also notes benefits in years to come: “The system is extensible enough that when content starts to become 8K rasters, instead of lots of 4K ones, we’ll be able to ingest it in a native manner.”

This day will almost certainly come with the full adoption of IP-based workflows. Given the increasing prevalence of the SMPTE 2110 suite of standards, that future doesn’t look too distant.

“Once this kind of workflow is online, creatives will be able to send us content that fits the volume exactly. They’ll send a raster that’s 16K by 3K, for example. That opens up the potential of those video payloads running on native fibre. The content server and rendering won’t necessarily have to live within a 2m cable distance of the volume itself,” says Hochman.
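A quick calculation shows why a raster of that size belongs on fibre. The uncompressed data rate is width x height x frame rate x bits per pixel. The sketch reads “16K by 3K” as 16384 x 3072 and assumes 10-bit RGB (30 bits per pixel); both are assumptions for illustration. It counts active picture only and ignores blanking, packet headers and error correction. The function name is hypothetical.

```python
def uncompressed_gbps(width: int, height: int, fps: float, bits_per_pixel: int) -> float:
    """Active-picture data rate in Gbit/s (no blanking, headers or FEC)."""
    return width * height * fps * bits_per_pixel / 1e9


# A "16K by 3K" raster, read here as 16384 x 3072, in 10-bit RGB.
for fps in (24, 60):
    print(fps, "fps:", round(uncompressed_gbps(16384, 3072, fps, 30), 1), "Gbit/s")
```

Rates in the tens of gigabits per second are well suited to fibre networking, which is what frees the content server from sitting next to the volume.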

With HELIOS’s cloud connectivity, one individual could potentially control multiple stages from a single location. In a world where this level of expertise is spread thin, the benefit is obvious. Boots on the ground will always be needed to physically handle LED modules and peripheral tech, but the advanced work of monitoring overall system health needn’t be done in person, even across 1,000 stages globally. Ultimately, it’s a step towards making ICVFX a viable means of creation for more productions.

FUTURE-PROOFING

One concern as filmmaking advances is updating hardware to meet new needs. This need not be the case with HELIOS.

“When we talk about HELIOS processing, it’s more than just the 1RU chassis – that’s just the ingest point,” Hochman explains. “That particular piece of hardware has full modularity on all inputs and outputs. Baseband inputs like DisplayPort, HDMI and 12G-SDI can all be combined as needed. We also have existing SFP inputs, for four SMPTE 2110 channels.

“With output, everything is IT fibre-based. The signal leaving HELIOS at that point is native IT infrastructure compliant. So, we are running a proprietary protocol, but it’s on standard switching hardware.”

The importance of the native SMPTE 2110 workflow cannot be overstated. In addition to enabling more refined content delivery, it overcomes the shortcomings of physical connections. HDMI and DisplayPort, for example, were predominantly designed for short-distance use. They simply do not handle large-format, multi-raster signalling as well as IP-based connections.

Hochman’s outlook is a positive one.

“As the industry builds more fixed volumes, they need to be managed. IT infrastructure will make that happen. If a volume isn’t online, production is stopped. And that’s what, $50,000 a minute?” he laughs. “With HELIOS, we’re providing more potential than ever, but making the tools easier to use and troubleshoot.”

EVERYTHING YOU NEED

HELIOS simplifies the workflow process, leading to a more resilient system